An AI-based computer system can gather data and use that data to make decisions or solve problems, using algorithms to perform tasks that, if done by a human, would be said to require intelligence. AI and machine learning (ML) systems are already delivering benefits: better health care, safer transportation, and greater efficiencies across the globe. But the increased amounts of data and computing power that enable sophisticated AI and ML models raise questions about privacy impacts, ethical consequences, fairness, and real-world harms if the systems are not designed and managed responsibly. FPF works with commercial, academic, and civil society supporters and partners to develop best practices for managing risk in AI and ML and to assess whether historical data protection practices such as fairness, accountability, and transparency are sufficient to answer the ethical questions they raise.

Featured

China’s Interim Measures for the Management of Generative AI Services: A Comparison Between the Final and Draft Versions of the Text

Authors: Yirong Sun and Jingxian Zeng. Edited by Josh Lee Kok Thong (FPF) and Sakshi Shivhare (FPF). The following is a guest post to the FPF blog by Yirong Sun, research fellow at the New York University School of Law Guarini Institute for Global Legal Studies at NYU School of Law: Global Law & Tech […]

FPF Statement on the adoption of the EU AI Act

“Today the European Union adopted the EU AI Act at the end of a long and intense legislative process. At the Future of Privacy Forum we believe that multistakeholder global approaches and advancing common understanding in the area of AI governance are key to ensuring a future with safe and trustworthy AI, one that protects […]

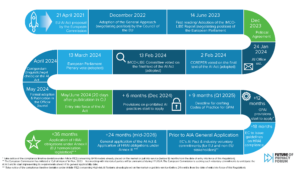

FPF and OneTrust Release Collaboration on Conformity Assessments under the proposed EU AI Act: A Step-by-Step Guide & Infographic

Today, the Future of Privacy Forum (FPF) and OneTrust released a collaboration on Conformity Assessments under the proposed EU AI Act: A Step-by-Step Guide and accompanying Infographic. Conformity Assessments are a key and overarching accountability tool introduced in the proposed EU Artificial Intelligence Act (EU AIA or AIA) for high-risk AI systems. Conformity Assessments are […]

How Data Protection Authorities are De Facto Regulating Generative AI

The Istanbul Bar Association IT Law Commission published Dr. Gabriela Zanfir-Fortuna’s article, “How Data Protection Authorities are De Facto Regulating Generative AI,” in their August monthly AI Working Group Bulletin, “Law in the Age of Artificial Intelligence” (Yapay Zekâ Çağında Hukuk). Generative AI took the world by storm in the past year, with services like […]

Insights into Brazil’s AI Bill and its Interaction with Data Protection Law: Key Takeaways from the ANPD’s Webinar

Authors: Júlia Mendonça and Mariana Rielli. The following is a guest post to the FPF blog by Júlia Mendonça, Researcher at Data Privacy Brasil, and Mariana Rielli, Institutional Development Coordinator at Data Privacy Brasil. The guest blog reflects the opinion of the authors only. Guest blog posts do not necessarily reflect the views of FPF. […]

Unveiling China’s Generative AI Regulation

Authors: Yirong Sun and Jingxian Zeng. The following is a guest post to the FPF blog by Yirong Sun, research fellow at the New York University School of Law Guarini Institute for Global Legal Studies at NYU School of Law: Global Law & Tech and Jingxian Zeng, research fellow at the University of Hong Kong […]

AI Verify: Singapore’s AI Governance Testing Initiative Explained

In recent months, global interest in AI governance and regulation has expanded dramatically. Many identify a need for new governance and regulatory structures in response to the impressive capabilities of generative AI systems, such as OpenAI’s ChatGPT and DALL-E, Google’s Bard, Stable Diffusion, and more. While much of this attention focuses on the upcoming EU […]

ETSI’s consumer IoT cybersecurity ‘conformance assessments’: parallels with the AI Act

In early September 2021, the European Telecommunications Standards Institute (ETSI) published its European Standard to lay down baseline cybersecurity requirements for Internet of Things (IoT) consumer products (ETSI EN 303 645 V2.1.1). The Standard is a recommendation to manufacturers to develop IoT devices securely from the outset. It also provides an internationally recognized benchmark – […]

Introduction to the Conformity Assessment under the draft EU AI Act, and how it compares to DPIAs

The proposed Regulation on Artificial Intelligence (‘proposed AIA’ or ‘the Proposal’) put forward by the European Commission is the first initiative towards a comprehensive legal framework on AI in the world. It aims to set rules on specific AI applications in certain contexts and does not intend to regulate AI technology in general. The proposed […]

FPF at CPDP LatAm 2022: Artificial Intelligence and Data Protection in Latin America

This summer the first-ever in-person Computers, Privacy and Data Protection Conference – Latin America (CPDP LatAm) took place in Rio de Janeiro on July 12 and 13. The Future of Privacy Forum (FPF) was present at the event, titled Artificial Intelligence and Data Protection in Latin America, participating in two panels and submitting a paper […]