An AI-based computer system can gather data and use that data to make decisions or solve problems, using algorithms to perform tasks that would be said to require intelligence if done by a human. AI and machine learning (ML) systems are already delivering benefits such as better health care, safer transportation, and greater efficiency across the globe. But the increased amounts of data and computing power that enable sophisticated AI and ML models raise questions about privacy impacts, ethical consequences, fairness, and real-world harms if the systems are not designed and managed responsibly. FPF works with commercial, academic, and civil society supporters and partners to develop best practices for managing risk in AI and ML, and to assess whether historical data protection practices such as fairness, accountability, and transparency are sufficient to answer the ethical questions these systems raise.

Featured

Colorado Enacts First Comprehensive U.S. Law Governing Artificial Intelligence Systems

On May 17, Governor Polis signed the Colorado AI Act (CAIA) (SB-205) into law, establishing new individual rights and protections with respect to high-risk artificial intelligence systems. Building on existing best practices and prior legislative efforts, the CAIA is the first comprehensive United States law to explicitly establish guardrails against discriminatory outcomes […]

FPF Responds to the OMB’s Request for Information on Responsible Artificial Intelligence Procurement in Government

On April 29, the Future of Privacy Forum submitted comments to the Office of Management and Budget (OMB) in response to the agency’s Request for Information (RFI) regarding responsible procurement of artificial intelligence (AI) in government, particularly the intersection of AI tools and systems procurement with other risks posed by the development and use […]

FPF Develops Checklist & Guide to Help Schools Vet AI Tools for Legal Compliance

FPF’s Youth and Education team has developed a checklist and accompanying policy brief to help schools vet generative AI tools for compliance with student privacy laws. Vetting Generative AI Tools for Use in Schools is a crucial resource as the use of generative AI tools continues to increase in educational settings. It’s critical for school […]

China’s Interim Measures for the Management of Generative AI Services: A Comparison Between the Final and Draft Versions of the Text

Authors: Yirong Sun and Jingxian Zeng. Edited by Josh Lee Kok Thong (FPF) and Sakshi Shivhare (FPF). The following is a guest post to the FPF blog by Yirong Sun, research fellow at the New York University School of Law Guarini Institute for Global Legal Studies at NYU School of Law: Global Law & Tech […]

FPF Submits Comments to the Office of Management and Budget on AI and Privacy Impact Assessments

On April 1, 2024, the Future of Privacy Forum filed comments to the Office of Management and Budget (OMB) in response to the agency’s Request for Information on how privacy impact assessments (PIAs) may mitigate privacy risks exacerbated by AI and other advances in technology. The OMB issued the RFI pursuant to the White House’s […]

FPF Statement on Vice President Harris’ announcement on the OMB Policy to Advance Governance, Innovation, and Risk Management in Federal Agencies’ Use of Artificial Intelligence

Following the groundbreaking White House Executive Order on AI last fall, which outlined ambitious goals to promote the safe, secure, and trustworthy use and development of AI systems, Vice President Harris has today announced the publication by the Office of Management and Budget of a binding memorandum on “Advancing Governance, Innovation, and Risk Management for […]

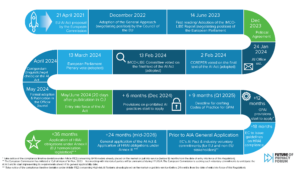

FPF Statement on the adoption of the EU AI Act

“Today the European Union adopted the EU AI Act at the end of a long and intense legislative process. At the Future of Privacy Forum we believe that multistakeholder global approaches and advancing common understanding in the area of AI governance are key to ensuring a future with safe and trustworthy AI, one that protects […]

FPF Awarded NSF and DOE Grants to Advance White House Executive Order on Artificial Intelligence

The Future of Privacy Forum (FPF) has been awarded grants by the National Science Foundation (NSF) and the Department of Energy (DOE) to support FPF’s establishment of a Research Coordination Network (RCN) for Privacy-Preserving Data and Analytics. FPF’s work will support the development and deployment of Privacy Enhancing Technologies (PETs) for socially beneficial data sharing […]

FPF Joins the NIST Artificial Intelligence Safety Consortium

The Future of Privacy Forum (FPF) is collaborating with the National Institute of Standards and Technology (NIST) in the U.S. Artificial Intelligence Safety Institute Consortium to develop science-based and empirically backed guidelines and standards for AI measurement and policy, laying the foundation for AI safety across the world. This initiative will help prepare the U.S. […]

FPF Announces International Technology Policy Expert as New Head of Artificial Intelligence

FPF has appointed international technology policy expert Anne J. Flanagan as Vice President for Artificial Intelligence (AI). In this new role, Anne will lead the privacy organization’s portfolio of projects exploring the data flows driving algorithmic and AI products and services, their opportunities and risks, and the ethical and responsible development of this technology. Anne […]